Upload Takes More Than 30 Seconds AWS

API Gateway supports a reasonable payload size limit of 10MB. One way to work within this limit, while still offering a means of importing large datasets to your backend, is to allow uploads through S3. This article shows how to use AWS Lambda to expose an S3 signed URL in response to an API Gateway request. Effectively, this allows you to expose a mechanism that lets users securely upload data directly to S3, triggered by the API Gateway.

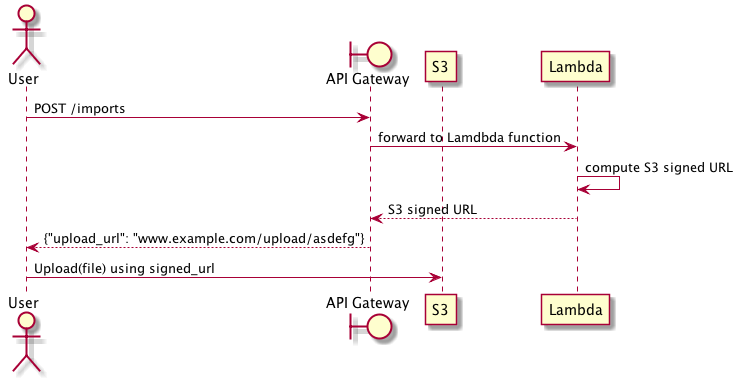

The basic flow of the import process is as follows: the user makes an API request, which is served by API Gateway and backed by a Lambda function. The Lambda function computes a signed URL granting upload access to an S3 bucket and returns that to API Gateway, and API Gateway forwards the signed URL back to the user. At this point, the user can use the existing S3 API to upload files larger than 10MB.

Upload through S3 signed URL

In practice, implementing this idea requires several interconnected parts: an S3 bucket, a Lambda function, and the API Gateway. Let's walk through how to create a working upload system using these components.

First, we need an S3 bucket for storing our data. All objects in S3 are private by default and only the object owner has permission to access these objects. However, the object owner can optionally share objects with others by creating a pre-signed URL, using their own security credentials, to grant time-limited permission to upload or download the objects. We are going to take advantage of this feature to allow users to upload objects to an otherwise private S3 bucket.

Second, we need a Lambda function that generates pre-signed URLs in response to user API requests. In this example, we will use the Python AWS SDK to create our Lambda function.

Third, we need to expose our Lambda function through API Gateway. This requires creating a basic API that proxies requests to and from Lambda. We will define this API using Swagger and import it to API Gateway to start serving requests.

The S3 Bucket

The S3 bucket can be created via the AWS user interface, the AWS command line utility, or through CloudFormation. The only requirement is that the bucket be set to allow read/write permission only for the AWS user that created the bucket. This is the default set of permissions for any new bucket.

For example, we can easily create a new S3 bucket using the AWS CLI by running the following command:

| |
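The same bucket can also be created from Python rather than the CLI. What follows is a minimal sketch, assuming boto3 is installed and AWS credentials are configured; the helper name `create_private_bucket` and the bucket name used in the usage note are hypothetical. It relies on the default permissions described above and sets no extra ACLs.

```python
def create_private_bucket(bucket_name, region="us-east-1", s3_client=None):
    """Create an S3 bucket; new buckets are private by default."""
    if s3_client is None:
        # Imported lazily so the helper can be exercised with a stub client.
        import boto3
        s3_client = boto3.client("s3", region_name=region)

    kwargs = {"Bucket": bucket_name}
    # us-east-1 is the default location and is not passed as a constraint.
    if region != "us-east-1":
        kwargs["CreateBucketConfiguration"] = {"LocationConstraint": region}
    return s3_client.create_bucket(**kwargs)
```

Calling `create_private_bucket("my-upload-bucket")` would create the bucket in us-east-1, provided the (hypothetical) name is globally unique.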

The Lambda function

Given your bucket name, you can test that you have everything set up correctly by generating a pre-signed URL. In the following example, we use the Python AWS SDK (boto) to generate the signed URL and then use cURL to upload data using a PUT request.

| |
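A minimal sketch of this test, assuming boto3 (the current Python AWS SDK) and configured credentials. The helper names, bucket, and key are hypothetical, and the upload request is built with the standard library instead of cURL so the whole example stays in Python.

```python
import urllib.request


def generate_upload_url(bucket, key, expires_in=3600, s3_client=None):
    """Return a time-limited URL that permits a PUT of `key` into `bucket`."""
    if s3_client is None:
        # Imported lazily so the helper can be exercised with a stub client.
        import boto3
        s3_client = boto3.client("s3")
    return s3_client.generate_presigned_url(
        "put_object",
        Params={"Bucket": bucket, "Key": key},
        ExpiresIn=expires_in,  # lifetime of the URL in seconds
        HttpMethod="PUT",
    )


def build_put_request(signed_url, data):
    """Build (but do not send) the PUT request that uploads `data`."""
    return urllib.request.Request(signed_url, data=data, method="PUT")
```

Passing the result of `build_put_request(url, payload)` to `urllib.request.urlopen` performs the upload; a cURL PUT against the same URL should behave equivalently.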

API Gateway expects responses to be returned as JSON, which corresponds to a Python dictionary. We can convert this simple test program into a Lambda function usable by API Gateway by returning the signed URL as a JSON payload.

| |
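A handler along these lines might look like the sketch below. It assumes boto3 is available in the Lambda runtime; the bucket name, the random object key, and the `url`/`key` response fields are illustrative assumptions, not the original listing. The optional `s3_client` argument exists only so the handler can be tested without AWS access.

```python
import uuid

BUCKET_NAME = "my-upload-bucket"  # hypothetical bucket name
URL_LIFETIME_SECONDS = 3600       # assumed expiry; adjust as needed


def lambda_handler(event, context, s3_client=None):
    """Return a dictionary (serialized to JSON) holding a signed upload URL."""
    if s3_client is None:
        # Imported lazily so the handler can be exercised with a stub client.
        import boto3
        s3_client = boto3.client("s3")

    # Use a random object key so concurrent uploads do not collide.
    key = uuid.uuid4().hex
    url = s3_client.generate_presigned_url(
        "put_object",
        Params={"Bucket": BUCKET_NAME, "Key": key},
        ExpiresIn=URL_LIFETIME_SECONDS,
        HttpMethod="PUT",
    )
    return {"url": url, "key": key}
```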

Finally, we zip this function and upload it to AWS as a new Lambda function. Assuming your function is named url_signer.py,

    $ zip -r UrlSigner.zip url_signer.py

Now, we can create the Lambda function. Caution must be taken here: the IAM role assigned to the Lambda function must have read/write access to S3 so that it can create the signed URLs, and it must be assumable by Lambda.

This implies the role must have the following policy granting access to S3.

| |
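One plausible shape for such a policy is sketched below as a Python dictionary that serializes to IAM policy JSON. The helper name and bucket name are hypothetical, and the choice of `s3:GetObject` and `s3:PutObject` is an assumption about what "read/write access" should cover; tighten or widen it to match your needs.

```python
import json


def s3_read_write_policy(bucket_name):
    """Build an IAM policy document allowing object reads and writes."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # Assumed minimal action set for read/write object access.
                "Action": ["s3:GetObject", "s3:PutObject"],
                "Resource": "arn:aws:s3:::%s/*" % bucket_name,
            }
        ],
    }


if __name__ == "__main__":
    # Print the JSON form, ready to paste into the IAM console.
    print(json.dumps(s3_read_write_policy("my-upload-bucket"), indent=2))
```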

And the following trust relationship, which makes the role assumable by Lambda functions.

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Principal": {
            "Service": "lambda.amazonaws.com"
          },
          "Action": "sts:AssumeRole"
        }
      ]
    }

With this in place, you can create your Lambda function:

    aws lambda create-function \
      --region us-east-1 \
      --function-name urlsigner \
      --zip-file fileb:///<path-to-zip>/UrlSigner.zip \
      --handler url_signer.lambda_handler \
      --runtime python2.7 \
      --role <your-arn>

API Gateway

At this point, we have an S3 bucket and a Lambda function that creates signed URLs for uploading to that bucket. The final step is creating the API Gateway frontend that calls the Lambda function. For API Gateway to invoke a Lambda function, you must attach a role assumable by API Gateway that has permission to call Lambda's InvokeFunction action.

This means you must have a role capable of being assumed by API Gateway with the following trust relationship:

| |
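A sketch of that trust relationship, written as a Python dictionary that serializes to the policy JSON. It mirrors the Lambda trust relationship shown earlier with the service principal swapped for API Gateway's; the helper name is hypothetical.

```python
import json


def api_gateway_trust_policy():
    """Build a trust policy that lets API Gateway assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "apigateway.amazonaws.com"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


if __name__ == "__main__":
    print(json.dumps(api_gateway_trust_policy(), indent=2))
```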

And this role must be able to invoke Lambda functions using the following policy:

| |
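A sketch of such a policy as a Python dictionary. Scoping the statement to a single function ARN is an assumption made here for least privilege; the helper name and the ARN in the usage note are hypothetical.

```python
import json


def lambda_invoke_policy(function_arn):
    """Build an IAM policy allowing invocation of one Lambda function."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "lambda:InvokeFunction",
                # Assumption: restrict the grant to the URL signer only.
                "Resource": function_arn,
            }
        ],
    }


if __name__ == "__main__":
    arn = "arn:aws:lambda:us-east-1:123456789012:function:urlsigner"
    print(json.dumps(lambda_invoke_policy(arn), indent=2))
```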

Now we can define the final API. It is fairly straightforward: we define a single API endpoint that integrates with the Lambda function we previously created, and that has the correct role for executing the Lambda function. This API returns the signed URL for uploading directly to S3.

| |
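The sketch below builds one possible Swagger 2.0 definition as a Python dictionary, using API Gateway's `x-amazon-apigateway-integration` extension. The `/import` path, the API title, and the helper name are assumptions chosen to match the prose; the ARNs are supplied by the caller.

```python
import json


def build_swagger(region, lambda_arn, role_arn):
    """Build a Swagger 2.0 document with one POST endpoint backed by Lambda."""
    # API Gateway addresses Lambda through this invocation URI format.
    uri = (
        f"arn:aws:apigateway:{region}:lambda:path/2015-03-31/"
        f"functions/{lambda_arn}/invocations"
    )
    return {
        "swagger": "2.0",
        "info": {"title": "ImportAPI", "version": "1.0"},  # hypothetical title
        "paths": {
            "/import": {  # assumed path for the import endpoint
                "post": {
                    "produces": ["application/json"],
                    "responses": {"200": {"description": "Signed upload URL"}},
                    "x-amazon-apigateway-integration": {
                        "type": "aws",
                        "httpMethod": "POST",  # Lambda is always invoked via POST
                        "uri": uri,
                        "credentials": role_arn,  # role API Gateway assumes
                        "responses": {"default": {"statusCode": "200"}},
                    },
                }
            }
        },
    }
```

Dumping the result with `json.dumps` gives a file that can be imported into API Gateway.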

You can use the AWS user interface to create a new API using this definition and deploy it as an API stage.

The Final Result

Now you should be able to make a POST request to your import endpoint and be returned a signed URL:

| |
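From the client's side, the whole exchange can be sketched with the Python standard library. This assumes the endpoint responds with a JSON object whose `url` field holds the signed URL; the field name and both helper names are hypothetical. The `opener` argument defaults to `urllib.request.urlopen` and exists only to make the functions testable offline.

```python
import json
import urllib.request


def request_signed_url(api_url, opener=urllib.request.urlopen):
    """POST to the import endpoint and return the signed URL it responds with."""
    req = urllib.request.Request(api_url, data=b"", method="POST")
    with opener(req) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["url"]  # assumed response field


def upload_file(signed_url, payload, opener=urllib.request.urlopen):
    """PUT the payload directly to S3 using the signed URL."""
    req = urllib.request.Request(signed_url, data=payload, method="PUT")
    with opener(req) as resp:
        return resp.status
```

A caller would first fetch the URL with `request_signed_url` and then pass it, along with the file bytes, to `upload_file`.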

You can use the signed URL to upload data directly to S3.

See also

- The False Dichotomy of Design-First and Code-First API Development

- The Cathedral, The Bazaar, and the API Marketplace

- Marrying RESTful HTTP with Asynchronous and Event-Driven Services

- Overambitious API gateways

- Comparing Swagger with Thrift or gRPC


Source: https://sookocheff.com/post/api/uploading-large-payloads-through-api-gateway/
